If the AI Is the Tutor, Who's Doing the Learning?

On Friday morning, 11 people sat around various tables at iAero in Yeovil with a real problem in front of each of them. Sole traders, small business owners, people from companies of 110, an account director from a creative agency, a couple from a dog and cat shampoo business, a music platform owner who is having to let their copywriter and social team go. Different problems, different industries, different stages.

And in every seat, alongside them, a 12th participant.

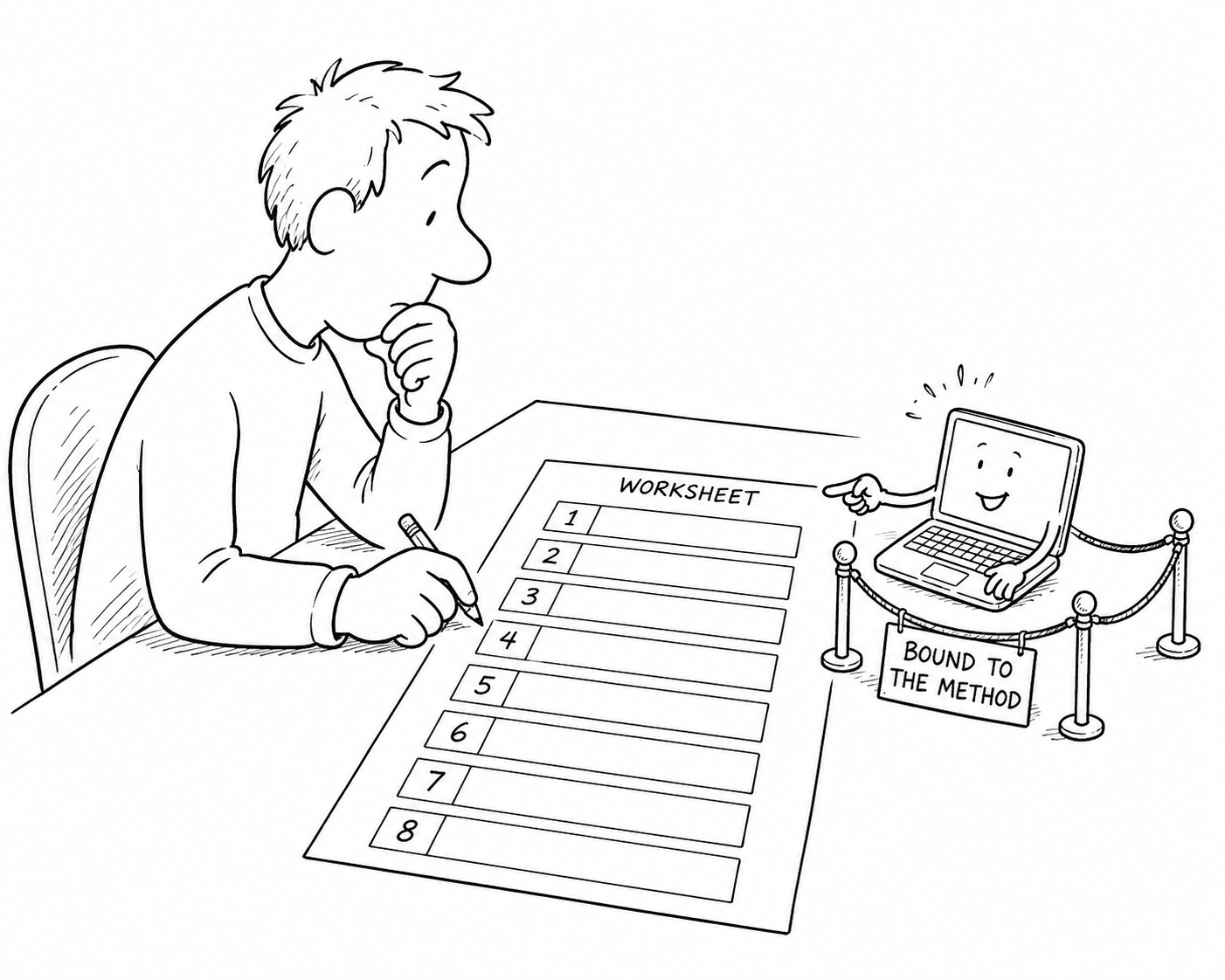

A custom-built Techosaurus bot, working through the same eight-section worksheet as they were, asking each person the same questions a workshop facilitator would ask, if a workshop facilitator could be in 12 places at once. That is the bit I want to write about.

The workshop was called Build Smarter, and Friday was the first time we had run it. Alex Spalding from the Somerset Innovation Hub at the University of Exeter co-delivered it with me. The Hub does excellent work supporting Somerset SMEs, and Alex brought a clear method to the day. Stop talking about AI. Define the problem first. Map how it actually works today. Design the smallest possible useful version. Plan a quick test. Plan how to make it stick. Run a peer challenge. Then commit to three concrete actions over the next month.

That last step is the whole point. Nobody walked out with a slide deck. They walked out with three written commitments, and at least one person in the room to whom they had explained those commitments.

The bot in the 12th seat

The bot was a Techosaurus build, called the Build Smarter AI Worksheet Assistant. People accessed it on their phone or laptop. It walked through the same eight worksheet sections, but it would not let anyone move on until they had locked the current one in. It could not wander off. It could not answer something the worksheet did not ask. It could not do their thinking for them, only support it. If someone asked it to skip ahead, it politely refused.

In a workshop with 11 different problems in the room and 2 facilitators, one-to-one attention is the bit you genuinely cannot scale. There is always one table where someone is stuck on the difference between a symptom and a root cause while you are at another table, helping them word their problem statement properly. The bot fills that gap quietly. Without ego. Without forgetting where the person was when you came back round.

It is doing the bit that facilitators alone cannot do.
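Underneath, the "don't move on until it is locked in" rule is a simple gate. As a rough sketch only, assuming nothing about how the actual Techosaurus bot was built, this is what that gating might look like in Python with the language model left out entirely. The class and method names (`WorksheetGate`, `lock_in`, `request_section`) and the section titles are hypothetical, and "locked in" is assumed to mean simply that a non-empty answer has been confirmed.

```python
from __future__ import annotations

from dataclasses import dataclass, field

# Hypothetical placeholder titles: the real worksheet has eight sections,
# but these names are invented for illustration.
SECTIONS = [f"Section {n}" for n in range(1, 9)]


@dataclass
class WorksheetGate:
    """Hypothetical sketch: a worksheet that only moves forward once the
    current section has been locked in. No language model is involved here;
    this is just the gating rule the bot is described as following."""

    sections: list[str] = field(default_factory=lambda: list(SECTIONS))
    answers: dict[str, str] = field(default_factory=dict)
    current: int = 0  # index of the section the participant is working on

    @property
    def finished(self) -> bool:
        return self.current >= len(self.sections)

    def current_section(self) -> str | None:
        return None if self.finished else self.sections[self.current]

    def lock_in(self, answer: str) -> bool:
        """Store a non-empty answer for the current section, then advance."""
        if self.finished or not answer.strip():
            return False  # nothing to lock in, so the participant stays put
        self.answers[self.sections[self.current]] = answer.strip()
        self.current += 1
        return True

    def request_section(self, index: int) -> str:
        """Reply to a request to jump to another section by index."""
        if not 0 <= index < len(self.sections):
            return "There is no such section on the worksheet."
        if self.finished:
            return "Every section is already locked in."
        if index > self.current:
            # Skipping ahead is politely refused rather than allowed.
            return f"Let's lock in {self.current_section()} first, then move on."
        if index < self.current:
            return f"{self.sections[index]} is already locked in."
        return f"We're working on {self.sections[index]} now."


if __name__ == "__main__":
    gate = WorksheetGate()
    print(gate.request_section(3))   # refused: Section 1 is not locked in yet
    print(gate.lock_in(""))          # False: an empty answer cannot be locked in
    print(gate.lock_in("I struggle to create repeatable sales..."))  # True
    print(gate.current_section())    # Section 2
```

In a real build, the model would sit on top of something like this, asking the facilitator's questions for whichever section `current_section()` returns. Keeping the gate outside the model is one way to make sure it cannot be talked into skipping ahead.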

Watching the method work

Stuart is a sole trader running a sales training and consultancy business. His problem was capacity. By the time we got round to him, he had already used the bot to articulate it: “I struggle to create repeatable sales in my sales training consultancy business, which limits revenue growth because the business depends heavily on my personal time and capacity.” That is a sharp problem statement. He worked through it with the bot until it was clear enough to act on, then designed his minimum useful solution. Record discovery calls. Hand the recording to a custom AI trained on his qualifying process. Let it pull the structured material a proposal needs. By the end he had a test plan, three actions, and a date in the diary to review the results.

John, who runs Grow Vision, started somewhere completely different and ended up somewhere just as concrete. He had ten Claude AI projects running in parallel, plus the Microsoft ecosystem, plus MS Planner, plus opportunity and funding pipelines, with the actions from each of them living in isolation. His minimum useful solution by the end of the morning was surprisingly lean. At the end of every Claude chat that comes out of a meeting, the chat creates the tasks and maps them straight into Microsoft Planner via the connector, and his only job is to open Planner and check they are there.

That is what a useful version of AI looks like. One job. One input. One output. No wishful thinking.

Forget AI first

Right at the start of the morning, we discussed something I keep thinking about. “Forget about AI first. What is the problem?” If you spend any time in the AI training world, you can guess how often that question gets skipped. People want to talk about the tool. Which AI to use. Which prompt to type. Which subscription to buy. With the assistance of the bot we had created, we would not let them. We made them sit with the problem until the problem was actually sharp.

That is a discipline I cannot enforce 11 times in parallel on my own.

Bound AI is what makes the difference

This is where my head has been since Friday.

The Build Smarter bot is a small example of something I think matters far more widely. It was bound. Bound to the worksheet. Bound to the method. Bound to the eight sections. Bound to the rule that you do not move on without locking in. It could only help inside that frame, and because of that, it was actually useful.

Public conversations about AI in education are stuck on three questions. Will it do their homework? Will it replace teachers? Will it dumb people down? On Friday we were quietly answering a question I do not hear asked enough. What is the AI bound to (where does it get its information), and does that binding serve the learner?

I have been doing the same thing at home with my daughter. She is in the first year of her GCSEs. We have set up a NotebookLM notebook for every subject and most of the sub-topics inside them, grounded only in her own revision books and class material. It quizzes her. It builds study cards. It generates mind maps. It produces podcasts she listens to in the car on the way somewhere else.

She has, in effect, individual tuition. But the tuition is bound to her content. It has very little room to make things up, because everything it draws on is her own material. It cannot drift into “well, if I were marking your exam I would…” nonsense, because it has not been given an exam to mark. It can only sharpen what she has put into it.

If the AI is the tutor, who is doing the learning? She is. The model is not learning Biology for her. It is asking her better Biology questions than I can, more often than I can, on her schedule rather than mine.

That is the same architecture that worked on Friday. Bind the AI to the method, and the method does the heavy lifting. The AI keeps the learner on track.

What that means for businesses

Most businesses are still asking whether AI can do the work. The better question is whether it can be bound tightly enough to your work (your IP, your standards, your tone of voice, your customer history, your processes) that it actually helps the people doing the work get better at it.

Used like that, an AI assistant becomes a tutor sitting next to your account managers, your support team, your finance team, your project managers. Asking better questions. Drafting starting points. Checking what came back against your method. What makes it valuable is that it is consistent.

Every business has a method that lives in someone’s head. The founder. The senior account manager. The operations director. When they leave, get pulled into something else, or take a fortnight in Crete, that method walks with them. Build a bot bound to that method, and the method stops walking out of the door with the person. It sits with everyone, all of the time.

What I took home from Friday

At the end of the morning, Alex said he had put an empty battery up in his slides, but the energy in the room had been better than he expected. I felt the same. Eleven people had walked in with 11 fuzzy ideas. They walked out with 11 clearer plans, a copy of their own completed worksheet, an extract of the detailed conversation the bot had with them, and three things they had committed to do in the next month.

If education is going to change in the next decade, that is the version of it I want to see. Bots available for every subject and class, bound to human-selected methods and materials, asking better questions than we can humanly ask of every person at the same time, leaving the human to do the thinking.

That is AI helping you get better at the work. Which has always been the point.

Scott Quilter | Co-Founder & Chief AI & Innovation Officer, Techosaurus LTD