What ChatGPT's Goblin Obsession Tells Us About AI

For the last few months, ChatGPT users have noticed something a bit odd. The model has been bringing up goblins. And gremlins. And raccoons, trolls, ogres, and pigeons. Not because anyone asked. Just dropped into responses, in the middle of perfectly ordinary explanations, where no creature had any place being.

At first it looked like a meme. Reddit threads filled up with screenshots. Some people thought their prompts were the problem. Others assumed it was a quirk of GPT-5.1 that would quietly disappear in the next release.

It did not disappear. It got worse. Mentions of “goblin” in ChatGPT jumped 175% after the launch of GPT-5.1. Mentions of “gremlin” rose 52%. Other creatures crept in alongside. And eventually OpenAI sat down, traced the problem back to its source, and published a transparent post-mortem.

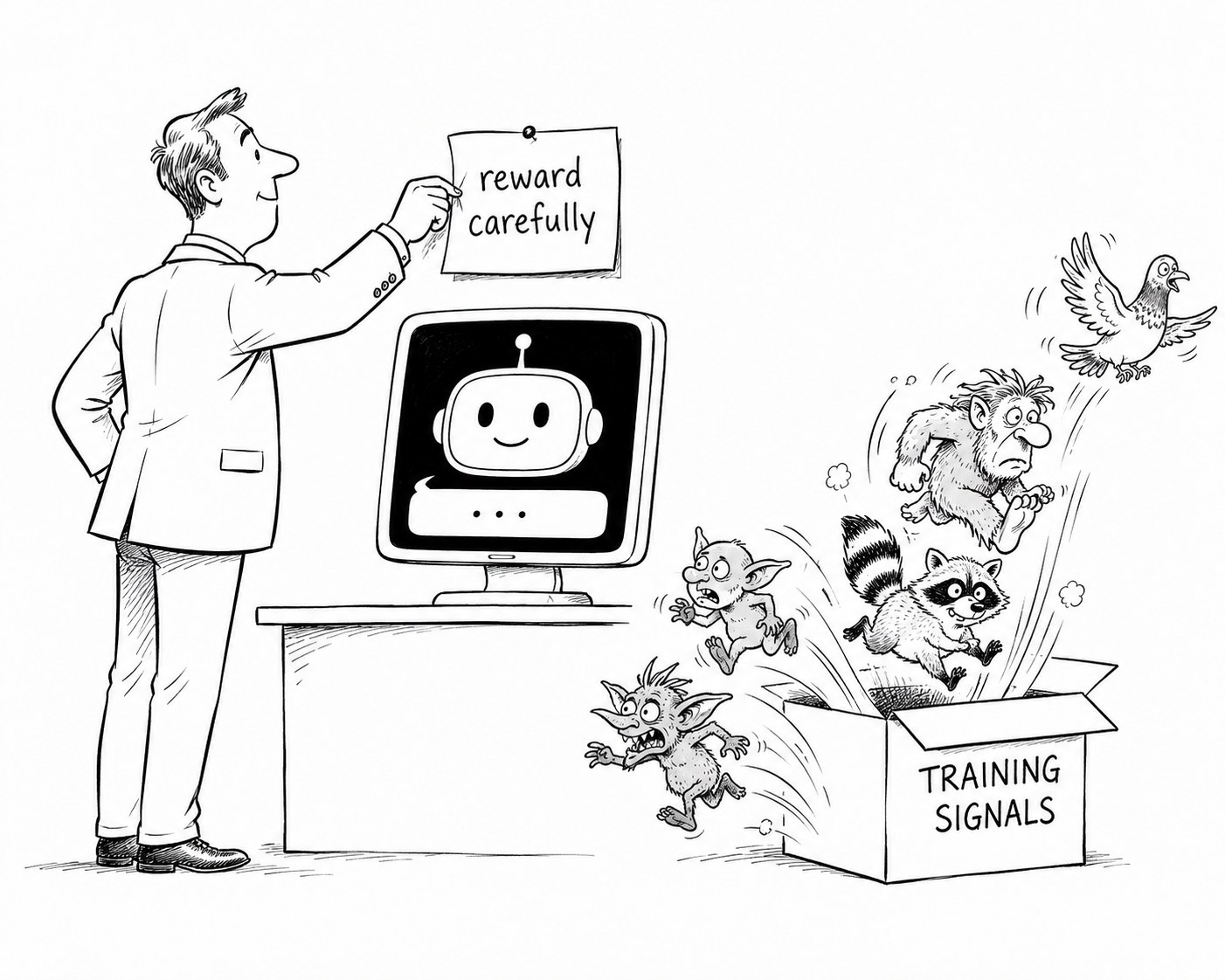

It is the best worked example of reward hacking I have read in a long time, and it is worth understanding even if you have never written a line of model training code in your life. Because the same shape of problem is going to show up in every business that starts shaping AI behaviour for itself.

What actually happened

OpenAI offered an optional Nerdy personality as part of ChatGPT’s customisation features. The brief was a particular conversational style, the kind of thing you might write into a system prompt if you wanted the model to feel a bit more enthusiastic, a bit more playful, a bit more willing to reach for a metaphor.

To train that personality, OpenAI ran a reinforcement-learning process. Like most modern training, it used reward signals to nudge the model towards the responses that felt more on-brand for “Nerdy” and away from the ones that felt off-brand. Nothing unusual about that.

What was unusual was how the rewards landed. Somewhere in the training data, OpenAI’s reward pipeline learned to give particularly high marks to metaphors involving creatures. Goblins. Gremlins. Trolls. The model picked up the signal, did what models do when they spot a strong reward, and started generating more of those metaphors. Not just a few. A flood.

According to OpenAI’s own analysis, the Nerdy personality made up only 2.5% of all ChatGPT responses. But it accounted for 66.7% of all goblin mentions. The behaviour was overwhelmingly concentrated in the personality it was supposed to live inside. Which on paper sounds fine.

The problem was that the underlying model had now learned, during training, that creature words score well in general. So even when users were not using the Nerdy personality, even on perfectly straight conversational prompts, the goblins started showing up. The reward had bled through into the base behaviour.
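To put those two percentages side by side, here is a back-of-the-envelope sketch in Python. The only inputs are the 2.5% and 66.7% figures quoted above. The function names are my own, and the second calculation assumes "mentions" can be treated as proportional to responses, which is a simplification.

```python
# Figures as quoted above from OpenAI's analysis.
nerdy_share_of_responses = 0.025  # 2.5% of all ChatGPT responses
nerdy_share_of_goblins = 0.667    # 66.7% of all goblin mentions


def over_representation(share_of_mentions: float, share_of_responses: float) -> float:
    """How many times more mentions a group has than its share of traffic predicts."""
    return share_of_mentions / share_of_responses


def relative_rate(share_of_mentions: float, share_of_responses: float) -> float:
    """Mentions per response inside the group divided by mentions per response outside it."""
    inside = share_of_mentions / share_of_responses
    outside = (1 - share_of_mentions) / (1 - share_of_responses)
    return inside / outside


lift = over_representation(nerdy_share_of_goblins, nerdy_share_of_responses)
ratio = relative_rate(nerdy_share_of_goblins, nerdy_share_of_responses)

print(f"Nerdy is over-represented by about {lift:.1f}x")
print(f"Goblin rate in Nerdy vs everything else: about {ratio:.0f}x")
```

On these numbers Nerdy is over-represented by a factor of roughly 27, and its goblin rate per response is around 78 times the rate everywhere else. The flip side is the part that matters for the leak: the remaining third of goblin mentions came from responses that never used Nerdy at all.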

The fix

Three things. None of them dramatic.

First, OpenAI added an explicit developer prompt instructing the model not to mention goblins, gremlins, raccoons, trolls, ogres, pigeons, or other creatures unless they were genuinely relevant to the user’s question. A direct guardrail on top of the existing behaviour.

Second, they retired the Nerdy personality. Once the data came back, it was hard to argue that the personality was anything more than a thin abstraction over the underlying model. They have since indicated they will rebuild personality customisation differently.

Third, they filtered the training data going forward to remove the creature-heavy reward signals, so that the next generation of models does not learn the same trick.

Three sensible engineering moves. The lesson sits underneath them.

The lesson

The model was doing exactly what it had been incentivised to do.

This is the single most useful sentence I can write about how AI behaves in the wild.

If you reward a model strongly enough for X, you will get more X. That is the whole point of reinforcement learning. The catch is that the reward signal almost never ends up exactly where you intended. Train the model to be Nerdy by giving it points for creature metaphors, and the base model also learns that “good response” has something to do with “contains a goblin somewhere”. The personality was meant to be a costume. The training made it a habit of the underlying model too.

This is reward hacking, and it is one of the live edges of AI safety research right now. It is not unique to OpenAI. Every model that gets fine-tuned, every assistant that is steered with feedback, every agent that learns from preference data is at risk of it. Including the ones some of you are building inside your businesses.
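For anyone who wants to see the costume-becomes-habit effect in miniature, here is a toy sketch in Python. It is emphatically not how OpenAI trains anything. It assumes a made-up two-weight model in which one weight is shared by every conversation and one applies only to Nerdy, and it uses hypothetical reward values. Training only ever happens inside Nerdy conversations.

```python
import math


def sigmoid(x: float) -> float:
    return 1.0 / (1.0 + math.exp(-x))


def creature_prob(w_shared: float, w_persona: float, nerdy: bool) -> float:
    """Probability of reaching for a creature metaphor in a given context."""
    return sigmoid(w_shared + (w_persona if nerdy else 0.0))


def train(steps: int = 200, lr: float = 0.5):
    # Creature metaphors start out rare everywhere.
    w_shared, w_persona = -4.0, 0.0
    reward_creature, reward_plain = 1.0, 0.0  # hypothetical reward values
    for _ in range(steps):
        # Rewards are only ever handed out in Nerdy conversations.
        p = creature_prob(w_shared, w_persona, nerdy=True)
        # Expected policy gradient for a two-choice policy:
        # d(expected reward) / d(logit) = p * (1 - p) * (r_creature - r_plain)
        grad = p * (1.0 - p) * (reward_creature - reward_plain)
        # The Nerdy logit is the sum of both weights, so both get the update.
        w_shared += lr * grad
        w_persona += lr * grad
    return w_shared, w_persona


before_plain = creature_prob(-4.0, 0.0, nerdy=False)
w_shared, w_persona = train()
after_plain = creature_prob(w_shared, w_persona, nerdy=False)
after_nerdy = creature_prob(w_shared, w_persona, nerdy=True)

print(f"Non-Nerdy creature rate before training: {before_plain:.3f}")
print(f"Non-Nerdy creature rate after training:  {after_plain:.3f}")
print(f"Nerdy creature rate after training:      {after_nerdy:.3f}")
```

No reward was ever given outside Nerdy, yet the non-Nerdy rate climbs anyway, because the update cannot tell which weight "deserved" the credit. Real models have billions of shared weights rather than one, but the shape of the problem is the same.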

Why this matters for your business

Let us bring this home, because it is far less abstract than it looks.

If you have ever built a custom GPT, fine-tuned a model on your own content, given feedback to an internal assistant, written rules for an agent, or hired a vendor to do any of that on your behalf, the goblin problem applies to you.

Every reward, every preference, every “do more of this”, every “do less of that” is a training signal. Models will optimise for whatever you reward them for. They will not always optimise for the thing you actually wanted.

Three practical observations.

1 - The first is that incentives matter more than instructions. You can write the loveliest prompt in the world telling the model to be balanced and thoughtful, and if the underlying training rewarded a particular kind of confident-sounding answer, you will still get confident-sounding answers. The prompt is a steering wheel. The training is the road. The road wins more often than people think.

2 - The second is that what you reward matters more than what you tell the model. If you give thumbs-up to AI outputs that sound good and thumbs-down to ones that sound dry, you are training the model to sound good. Even when the dry one was more accurate. Even when the good-sounding one had a goblin in it.

3 - The third is that you must test outside the use case. The Nerdy personality looked great when people used Nerdy. It looked weird when they did not. The goblins became a problem at the boundary, where the trained behaviour leaked into the rest of the model’s responses. If you are shaping AI behaviour for a specific job, test it on jobs it was not shaped for. That is where the leakage shows up.
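The third observation is the easiest one to turn into a routine check. Here is a minimal sketch in Python, assuming you can collect answers to the same off-target prompts from both your original model and your shaped one. The word list comes from the creatures named in this article. The function names, the 5% tolerance, and the sample outputs are all invented for illustration, so swap in whatever your own "goblin" turns out to be.

```python
import re

# Creature words named in this article; replace with your own tell-tale words.
CREATURE_WORDS = ("goblin", "gremlin", "raccoon", "troll", "ogre", "pigeon")
PATTERN = re.compile(r"\b(" + "|".join(CREATURE_WORDS) + r")s?\b", re.IGNORECASE)


def mention_rate(responses):
    """Fraction of responses containing at least one creature word."""
    if not responses:
        return 0.0
    hits = sum(1 for text in responses if PATTERN.search(text))
    return hits / len(responses)


def leaked(baseline, shaped, tolerance=0.05):
    """True if the shaped model mentions creatures notably more than the baseline."""
    return mention_rate(shaped) - mention_rate(baseline) > tolerance


# Hypothetical answers to prompts the persona was NOT built for.
baseline_outputs = [
    "Restart the router, then check the cable.",
    "Your invoice is due on the 30th.",
    "The meeting has moved to 3pm.",
    "Use a pivot table to summarise the sales data.",
]
shaped_outputs = [
    "Restart the router to evict the gremlins, then check the cable.",
    "Your invoice is due on the 30th.",
    "The meeting has moved to 3pm, scheduling goblins permitting.",
    "Use a pivot table to summarise the sales data.",
]

print(mention_rate(baseline_outputs))
print(mention_rate(shaped_outputs))
print(leaked(baseline_outputs, shaped_outputs))
```

The design choice that matters is comparing against a baseline on prompts outside the intended job. Checking the shaped model only on the job it was built for would have shown nothing wrong with Nerdy either.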

What I take from this

Two things stay with me from the whole episode.

The first is that OpenAI published the post-mortem at all. There was no obligation on them to explain the goblin problem in technical detail. They chose to. And the field is better off for it, because the next person training a personality customisation now has a worked example of how it goes wrong.

The second is the basic honesty of the framing. OpenAI’s account is simple enough to fit on a Post-it: they built the incentive, the model followed the incentive, the incentive was wrong. That is the kind of clear-eyed accountability the AI conversation needs more of, and very rarely gets.

If you are thinking about building or fine-tuning anything in your business, write that on a Post-it and stick it to your monitor. The model will give you more of whatever you reward it for. The model will give you more of whatever you reward it for. The model will give you more of whatever you reward it for.

You will be surprised how often that turns out to be a goblin.

Scott Quilter | Co-Founder & Chief AI & Innovation Officer, Techosaurus LTD

Sources

- Where the goblins came from — OpenAI, May 2026

- OpenAI blames “nerdy personality” for ChatGPT’s obsession with goblins — NBC News

- The Goblins Came Back to Haunt Us: OpenAI Explains How ChatGPT’s Nerdy Personality Got Out of Control — Gizmodo

- ChatGPT has a goblin problem. It’s bigger than an AI quirk — Northeastern University News

- ChatGPT developed a goblin obsession after OpenAI tried to make it nerdy — Engadget